Ever since the death of the analogue CRT and the introduction of digital displays, there’s always been the potential to drive a digital display at its non-native resolution.

Because a digital display’s pixel grid is fixed and can’t change depending on the input signal, unlike the more analogue system of phosphors excited on a CRT by its electron gun, it’s always showing you those fixed pixels no matter what.

On a modern digital display, it’s common to just always render at the same resolution as your display, be that your operating system’s desktop environment or a game, which takes away any question about scaling. Easy.

If your system can’t do that, scaling comes back into the equation, and there are two broad underlying reasons why that happens in most cases. The first one is performance. The GPU may not be able to render your game at the native resolution of your display at a high enough frame rate for you to enjoy it, demanding a lower-resolution, scaled-up render.

The second is that the hardware or software doing the rendering simply can’t output at your display’s native resolution in the first place. An example is an emulator for an older system that supports resolutions well below your display’s, because higher resolutions weren’t available at the time. In both cases, you need to take output images that aren’t the same resolution as your display and make them fit.

Maintaining performance and image quality

There are essentially infinite ways to scale an image from one resolution to another, up or down, but any desirable and practical methods have two key properties: performance and image quality. You need to be able to scale the image efficiently and quickly, whether it’s performed in software or hardware, and it ideally has to look good at the end.

A scaler without performance quickly becomes useless for real-time uses such as games, and one without a particular level of image quality will quickly be rejected as ugly, especially if it affects the spirit of the original content.

When scaling up the output of old systems, it’s worth remembering that they were originally connected to low-resolution analogue CRTs. The image on those monitors had a particular look about it, and if you take it away, you remove some of the nostalgia hit you’ll get when playing those old systems via software emulation, or via a physical scaler that lets you display the original analogue output on your digital monitor.

Depending on the CRT technology in play, you could easily see individual RGB phosphor patterns and the phosphor masks, and if you were lucky enough to have a Sony Trinitron monitor, you could see the tiny tungsten support wires of its aperture grille. Those visual aspects of the display technology of CRTs are now burned into the brains of people old enough to have experienced them almost as much as the content of the games played.

High-density modern displays

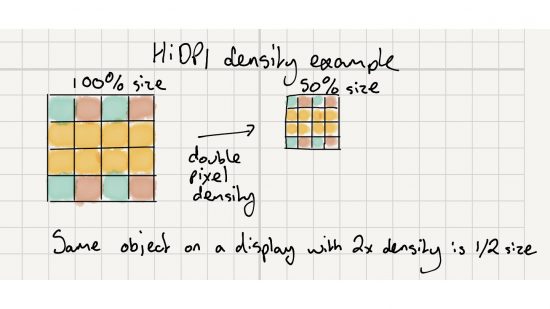

Outside of the case for upscaling old games, there’s another class of scaling problem that’s worth considering, which is drawing a desktop on a high-density display that packs in a serious number of pixels per inch (ppi). These HiDPI displays put a huge number of pixels in front of you, to the point where your visual system finds it hard to distinguish between pixels at sensible viewing distances.

Historically, operating systems had their user interfaces (UI) designed for displays and printing systems with 72 pixels per inch (ppi), so if you ask them to render UI elements at the native resolution of a HiDPI display then they’ll appear too small.

It’s easy to imagine why. I’m currently using a 27in 4K display with a pixel density of 163ppi, so an object that’s 163 pixels wide will appear to be roughly an inch wide on the screen. That same 163-pixel wide object on an 82ppi display will appear roughly 2in wide. Density matters, so an operating system needs to treat the UI differently on HiDPI displays.
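
As a rough check on those numbers, here’s a minimal Python sketch that works out pixel density from a display’s resolution and diagonal size, and then the physical width of an object at a given density. The function names are our own for illustration, and the 3,840 x 2,160 resolution is an assumption about what ‘4K’ means for this display.

```python
import math


def pixels_per_inch(width_px, height_px, diagonal_in):
    """Pixel density from the resolution and the diagonal size in inches."""
    diagonal_px = math.hypot(width_px, height_px)
    return diagonal_px / diagonal_in


def physical_width_in(width_px, ppi):
    """Physical width in inches of an object that is width_px pixels wide."""
    return width_px / ppi


# Assumed: a 27in display running at 3,840 x 2,160
ppi_4k = pixels_per_inch(3840, 2160, 27)
print(round(ppi_4k))                             # 163
print(round(physical_width_in(163, ppi_4k), 2))  # 1.0
print(round(physical_width_in(163, 82), 2))      # 1.99
```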

That means your operating system needs to offer a method of rendering your desktop UI in a scaled way on HiDPI displays, so it looks right. Some operating systems do an almost flawless job at it, particularly macOS. To be fair to Windows specifically, though, it’s a very hard problem to solve on an operating system that must run legacy software designed in the days before HiDPI display technology had even been invented.

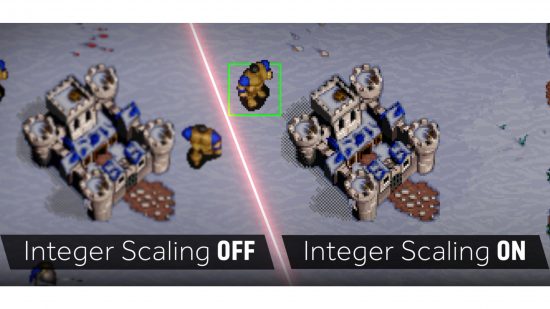

Now we know why scaling in general is necessary and non-trivial, let’s circle back to upscaling older gaming content designed for much lower-resolution displays. GPU vendors can use built-in hardware on their modern products, which can help to solve the problem in a new way that has great performance and preserves as much of the image quality of the original content as possible, addressing the two key pillars of any scaling system. It’s called integer scaling, so let’s dive in and see how it works.

Integer scaling explained

The best way to understand integer scaling is to first think about some of the other ways you could potentially scale up an image to a higher resolution. Imagine you have to map one input pixel to more than one output pixel – you need a mathematical way of transforming that single input pixel into those multiple outputs.

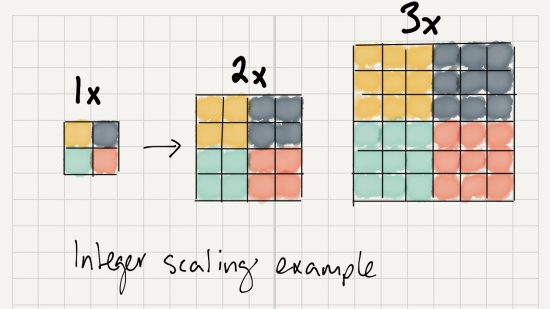

Let’s consider the simplest case: mapping a simple 4 x 4-pixel source image up to an 8 x 8-pixel target image. The source and target have the same aspect ratio, which is an important property for any scaler to consider, and the upscaled ratio is 2:1, resulting in four times as many pixels (2x more in both the horizontal and vertical dimensions) – 16 pixels in the source become 64 pixels in the target.

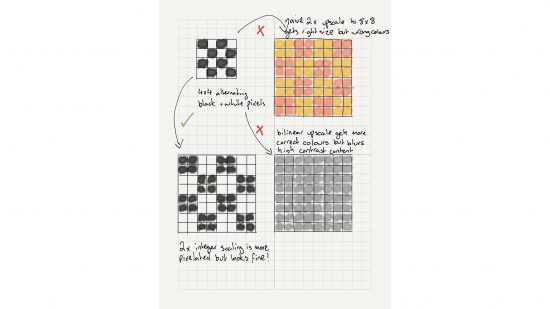

For the alternating pattern of pixels in the 4 x 4 source image, there are many ways you could take that source data and transform it into the 8 x 8 target. You could have a scaler that says for each white pixel in the source image you want to have four pink pixels, and for each black pixel in the image you want to have four yellow pixels.

Clearly, that’s going to give you an 8 x 8 image that’s upscaled from the 4 x 4 in a particular way, but not in a way that gives you a high-quality resulting image that preserves the look of the original. We can definitely do better.

Better upscaling filters

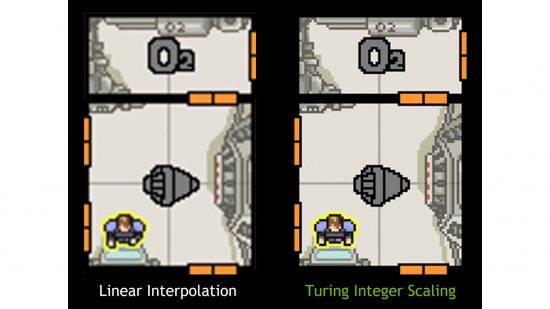

So, what about a method that takes an average of the colour of the 2 x 2 block of pixels near the source one, in order to generate more for an upscaled target? This is also known as a bilinear filter. That sounds good, since we need more pixels in the upscaled target, and while that approach will get you a more accurate representation than our white-to-pink and black-to-yellow swap from earlier, it’s still not going to work with high-contrast imagery.

For example, the average of white and black is grey. Now imagine that averaging effect carried out over the whole image as it’s upscaled, smearing the wrong colour over the image anywhere that it finds high contrast, which tends to be at the edges of objects. Imagine Mario and Yoshi smeared into the flatter backgrounds of Super Mario World. Again, we can do better.
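
To see that smearing in numbers, here’s a minimal sketch of a bilinear upscale on a tiny greyscale image, written in plain Python. It assumes pixel-centre sampling with clamping at the borders, which is one common convention rather than what any particular scaler does, and the function name is ours.

```python
def bilinear_upscale(src, factor):
    """Upscale a greyscale image (rows of 0-255 values) by blending neighbours."""
    src_h, src_w = len(src), len(src[0])
    dst = []
    for y in range(src_h * factor):
        # Map the output pixel centre back into source space, clamped to the edges
        sy = min(max((y + 0.5) / factor - 0.5, 0.0), src_h - 1)
        y0 = int(sy)
        y1 = min(y0 + 1, src_h - 1)
        fy = sy - y0
        row = []
        for x in range(src_w * factor):
            sx = min(max((x + 0.5) / factor - 0.5, 0.0), src_w - 1)
            x0 = int(sx)
            x1 = min(x0 + 1, src_w - 1)
            fx = sx - x0
            # Blend horizontally on two source rows, then vertically between them
            top = src[y0][x0] * (1 - fx) + src[y0][x1] * fx
            bottom = src[y1][x0] * (1 - fx) + src[y1][x1] * fx
            row.append(round(top * (1 - fy) + bottom * fy))
        dst.append(row)
    return dst


# A hard edge: black (0) on the left, white (255) on the right
edge = [[0, 255],
        [0, 255]]
print(bilinear_upscale(edge, 2)[0])  # [0, 64, 191, 255]
```

The source only contains pure black and pure white, but the upscaled row picks up two greys that were never in the original. That’s the smear.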

The obvious path from that kind of idea is an increasingly wide filter, which takes a look at a larger area around the source pixel to find information to blend together for the new target pixels. Take a step past that and you get a filter that’s content-aware.

We mentioned the high-contrast edges that do badly with the averaging bilinear filter earlier, so maybe our new upscaling filter can adopt a different method when it encounters regions of pixels that are clearly distinct from one another in their colour, and therefore have high contrast. Both those approaches can provide higher image quality than the simpler bilinear filter, but only for certain kinds of content.

Bespoke filtering for particular content

That leads us to thinking about creating bespoke filters, which are tailored to the kind of content being upscaled. Earlier, we talked about preserving the look and feel of older games designed for much older, lower-resolution analogue display technologies, such as CRTs. Knowing that those games have particular visual properties, and what they are, we can improve our filtering to make it more authentic.

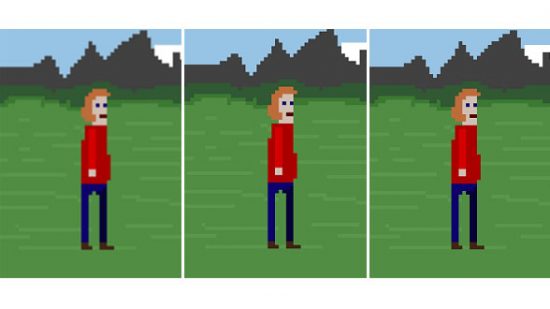

So, knowing that we’ve got that pixelated, blocky look in every part of older games, how do we preserve it, rather than smear it all over the screen, with our earlier attempts at filtering the source image?

The current best answer is almost so obvious that you might initially wonder why it hasn’t been the solution all along: for every input pixel that you sample, you just copy its colour wholesale to every target pixel it maps to. There’s no average blending, no looking further outside the pixel at its neighbours to try to do better, no contrast or other content-aware approach. Just a plain old copy of the original colour into the upscaled region – nothing more, nothing less. That’s integer scaling and it’s honestly that simple.

For every input pixel you want to upscale, you produce an integer multiple of new ones that are exactly the same in the upscaled image. So 2x integer scaling produces four new pixels in a 2 x 2 block for each input pixel, 3x integer scaling produces nine new pixels in a 3 x 3 block for each one, and so on.
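
The block copy described above can be sketched in a few lines of Python. This is only an illustration on a list-of-rows image, with a function name of our own choosing, not how any GPU driver implements it.

```python
def integer_scale(src, factor):
    """Copy every source pixel into a factor x factor block of identical pixels."""
    if factor < 1:
        raise ValueError("factor must be a whole number of at least 1")
    dst = []
    for row in src:
        # Repeat each pixel horizontally...
        wide_row = [pixel for pixel in row for _ in range(factor)]
        # ...then repeat the widened row vertically
        for _ in range(factor):
            dst.append(list(wide_row))
    return dst


checker = [[0, 1],
           [1, 0]]
for row in integer_scale(checker, 2):
    print(row)
# [0, 0, 1, 1]
# [0, 0, 1, 1]
# [1, 1, 0, 0]
# [1, 1, 0, 0]
```

Every value in the output already existed in the source, so no new colours are introduced.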

There’s no fractional arithmetic or fancy filtering – you just copy the pixel blocks. There has to be a catch though, right? There are a couple, sadly, and the aspect ratio of the original content, which we mentioned earlier in this feature, is the first one.

What’s the catch?

In the modern era, we’ve moved to a much wider format display than the old days. Back then, standard-definition TV was made for 4:3 screens, although the digitised frames were usually stored at 3:2 (720 x 480, for the analogue NTSC spec used in the USA) or 5:4 (720 x 576, for our competing PAL format), with non-square pixels making up the difference.

There’s a bit more to it than that too, because old analogue signals would hide information in the lines at the top that weren’t meant to be displayed, giving rise to the concept of overscan, an issue that still haunts digital TVs to this day. In general, though, we had fairly narrow formats back then, and old gaming systems would target displays in that format.

These days the most common digital display format by absolute miles is 1080p at a 16:9 aspect ratio, which is maintained by both 4K and 8K displays too. In the fringes of the modern gaming world, you’ll find displays with even wider aspect ratios that head towards the cinematic 2.35:1 or higher. The problem that besets a cinematic presentation on a 16:9 TV or display at home is the same one that affects integer scaling as a technique, even though we’ve identified that it’s a great upscaler for older, more pixelated content: black bars.

Imagine a game system designed for PAL TVs that outputs 720 x 576 (5:4), and you want to integer-upscale it to your 16:9 4K display. Your 4K display is much wider, so stretching the image out to fit is one technique, but that’s not what integer scaling does. Instead, you need black bars on either side of the original-format image, which is centred on your new display.

The same approach applies to old PC display formats, which were usually 4:3, with resolutions such as 640 x 480, or 800 x 600. Integer scaling up to a wider-format display is possible, but the scaling options are limited, meaning 640 x 480 can be 2x integer-scaled up to 1,280 x 960 on a 1080p display, or 4x integer-scaled up to 2,560 x 1,920 on a 4K display, with black bars on either side.

Knowing about the black bars problem, and knowing that integer scaling means you can only scale in whole numbers, you can see that some source resolutions just can’t be integer-scaled to today’s wider-format displays because the maths doesn’t work out.

Take a resolution of 800 x 600, for example. You can’t integer-scale that up on a 1080p display, which has 1,920 x 1,080 pixels. A 2x scale fits in the horizontal direction, since that only needs 1,600 pixels, but it doesn’t fit in the vertical, because you’d need 1,200 pixels, more than the 1,080 available. In these situations, which are more common than you might initially think on a 1080p 16:9 display, you’re out of luck.
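
The arithmetic in these examples can be sketched as a small Python helper that finds the largest whole-number factor that fits, plus the size of the bars left over. The function name and return layout are hypothetical, and it assumes the image is centred on the display.

```python
def fit_integer_scale(src_w, src_h, dst_w, dst_h):
    """Return (factor, out_w, out_h, bar_x, bar_y), or None if the source is too big.

    bar_x is the width of each side bar and bar_y the height of the top and
    bottom bars, assuming the scaled image is centred on the display.
    """
    # The tighter of the two dimensions decides the factor
    factor = min(dst_w // src_w, dst_h // src_h)
    if factor < 1:
        return None
    out_w, out_h = src_w * factor, src_h * factor
    return factor, out_w, out_h, (dst_w - out_w) // 2, (dst_h - out_h) // 2


print(fit_integer_scale(640, 480, 1920, 1080))  # (2, 1280, 960, 320, 60)
print(fit_integer_scale(640, 480, 3840, 2160))  # (4, 2560, 1920, 640, 120)
print(fit_integer_scale(800, 600, 1920, 1080))  # (1, 800, 600, 560, 240)
```

For 800 x 600 on a 1080p display, the largest factor that fits is 1x, which means no upscaling at all, just a small image surrounded by thick bars.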

Integer scaling really only shines on higher-resolution modern formats, such as 4K and above, since you need that target pixel space to do the job in many cases. That’s the second catch to which we alluded earlier, and it’s the reason why, if it’s so obvious that integer scaling is the right kind of scaling for certain kinds of content, it hasn’t really shown up until recently.

Integer scaling in hardware

There are ways to force your setup into integer scaling, though, ensuring pixel precision without blurring and smearing. Let’s start by taking a short dive into the ways in which hardware might implement support for integer scaling. Intel, Nvidia and AMD have all leaned on existing hardware in their GPUs in order to accelerate it, and there are many different approaches.

Probably the easiest way to do it is via the display controllers that live on the GPU. Their sole job is taking a frame buffer the GPU has generated, which represents a frame of rendering in your game, or one that the operating system has generated while drawing itself, and turning it into signals to pump over the wire to your display.

Display controllers tend to have scaling engines built into them to handle upscaling, because it’s always potentially a task that a GPU might need to perform. Modern display controllers are usually specialised for the general upscaling and filtering we mentioned earlier – the kinds that are good for some purposes, but not others.

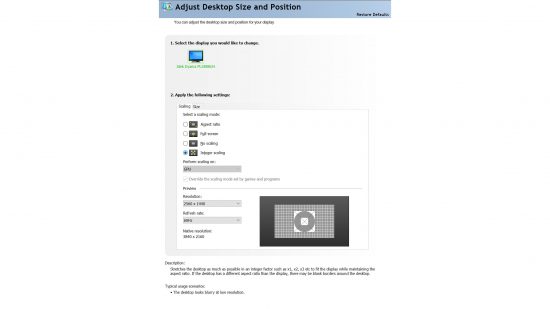

If a GPU maker is lucky, it’s a small matter of programming that display controller to just duplicate the incoming pixels into the square, integer-scaled regions as they go out over the wire. That’s great if your display hardware supports it. Intel takes this approach when possible, for example.

Asking the shader core instead

The fallback is just asking the GPU’s shader core to do it. In this case, the shader core draws the pixels anyway at the start, so it’s not too much of a job. It basically does this behind the application’s back as it draws at a lower resolution, and can then copy the output pixels to a larger scaled target image in the way we need for integer scaling to work correctly.

This method also requires no modification of the application or game drawing the source images.
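
We don’t know exactly how any vendor’s driver does this, but conceptually a shader runs once per output pixel and works backwards to find the source pixel it needs. Here’s a hedged Python sketch of that per-pixel lookup, including the centring and black bars. The names `present_frame` and `BLACK` are ours, and a real shader would of course run on the GPU rather than in nested loops.

```python
BLACK = 0  # Placeholder colour used for the bars


def present_frame(src, dst_w, dst_h, factor):
    """Build a dst_w x dst_h frame with src integer-scaled and centred in it."""
    src_h, src_w = len(src), len(src[0])
    off_x = (dst_w - src_w * factor) // 2
    off_y = (dst_h - src_h * factor) // 2
    if off_x < 0 or off_y < 0:
        raise ValueError("scaled image does not fit on the display")
    frame = []
    for y in range(dst_h):
        row = []
        for x in range(dst_w):
            # Whole-number division maps each output pixel back to one source pixel
            sx = (x - off_x) // factor
            sy = (y - off_y) // factor
            inside = 0 <= sx < src_w and 0 <= sy < src_h
            row.append(src[sy][sx] if inside else BLACK)
        frame.append(row)
    return frame


tiny = [[1, 2],
        [3, 4]]
for row in present_frame(tiny, 6, 4, 2):
    print(row)
# [0, 1, 1, 2, 2, 0]
# [0, 1, 1, 2, 2, 0]
# [0, 3, 3, 4, 4, 0]
# [0, 3, 3, 4, 4, 0]
```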

AMD does it this way, which lets the company support the feature on a huge range of GPUs, stretching all the way back to the original GCN products introduced in 2012. AMD even supports this technique on Windows 7 as well as Windows 10, compared with Nvidia and Intel, which only support it on Windows 10.

Meanwhile, Nvidia limits shader core integer scaling support to Turing-family GPUs and later, and Intel limits it solely to 10th-generation Core products. AMD’s implementation also enables you to set integer scaling on a game-by-game basis, letting you limit it to just the games that need it, unlike Nvidia and Intel. Intel’s technique in particular isn’t great because it applies to everything being rendered, not just the game.

Performance impact? What performance impact?!

There’s a minimal performance impact from this approach, because more memory bandwidth is consumed, and there’s a bit more shader processing that needs to happen, but on a modern GPU, integer scaling is effectively free for all intents and purposes.

The source resolution is low, so the extra work required is minimal enough to not matter most of the time, and target frame rates can likely always be met. We tested it out with some older PC game titles on an AMD Radeon RX 5700 XT under Windows 10 and noticed no real measurable performance hit.

If you own a high-resolution display and an OS and GPU combination that’s modern and permissive enough, integer scaling can breathe some fresh life into some older content on your modern system, as well as enabling you to play modern games at lower resolutions on a high-resolution screen without them looking blurry – great if you have a 4K screen for work, but only a low-powered GPU for gaming.

Limitations and the future

There’s also scope for combining integer scaling with other techniques. Intel recognises the limitations of integer scaling in its implementation, for example, and lets you combine it with ‘nearest neighbour’ upscaling after the integer scaling has done its work. In some cases where integer scaling can’t work at all, as we discussed before, nearest neighbour replaces it entirely.

Conceptually, ‘nearest neighbour’ upscaling and sampling overlays the target pixel grid on top of the source one and, for every pixel that doesn’t perfectly map to a source one, or an integer-scaled source one, it just picks the nearest one to the sampling point. You lose some of the perfect crispness you get with integer scaling, but you support more source resolutions.
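
A minimal sketch of that idea in Python, assuming each target pixel is sampled at its centre. The function name is our own and this isn’t Intel’s implementation. It uses whole-number arithmetic to pick the source pixel, so it works for any target size, not just integer multiples.

```python
def nearest_neighbour_scale(src, dst_w, dst_h):
    """Scale to any size by picking the source pixel nearest each target pixel centre."""
    src_h, src_w = len(src), len(src[0])
    dst = []
    for y in range(dst_h):
        # Centre of target row y, mapped into source rows using integer maths
        sy = (2 * y + 1) * src_h // (2 * dst_h)
        row = []
        for x in range(dst_w):
            sx = (2 * x + 1) * src_w // (2 * dst_w)
            row.append(src[sy][sx])
        dst.append(row)
    return dst


two_pixels = [[10, 20]]
print(nearest_neighbour_scale(two_pixels, 4, 1))  # [[10, 10, 20, 20]]
print(nearest_neighbour_scale(two_pixels, 3, 1))  # [[10, 20, 20]]
```

At an exact 2x factor the result matches integer scaling. At 1.5x, one source pixel ends up a single pixel wide and the other two pixels wide, which is the uneven look that costs you some crispness.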

Some emulators also use techniques that not only upscale but also change the presentation of the content, so it looks like it’s running on a CRT, complete with scanline gaps, content-aware softening and luminance control to mimic the look of CRT phosphors. In addition, there are bespoke upscalers that are further tuned to the underlying content, and treat it differently while filtering to enhance and preserve the original look as much as possible.

Those techniques are usually implemented on the GPU using shader programs to let them be flexible and programmable, so there’s no real scope for them being baked into silicon, but it’s possible for GPU vendors to lift some of that innovation into the driver to make it available to game content outside of emulation. This could include older PC content that runs on modern PCs, but was just designed for the CRT era and much lower resolutions. Maybe that will happen in the years to come.